![]()

![]()

![]()

![]()

I. Introduction

Le Go, vieux jeu asiatique,

résiste toujours aux machines.Il fait partie de ces jeux où la méthodologie usuelle, à base d'algorithme alpha-beta

et de fonction d'évaluation approchée, ne donne rien de bon.

Forte combinatoire, pas de fonction d'évaluation

naturelle. Depuis 2006, une méthodologie appelée MCTS s'impose comme le meilleur

outil, et s'applique en outre à de nombreux jeux difficiles et à de nombreux

problèmes de planification dans l'incertain.MoGo(TW) est une implémentation de MCTS pour le

jeu de Go.

En 2010, MoGoTW a obtenu la certification Dan par l'association Taiwanaise de Go:

http://mepopedia.com/forum/

En 2010, MoGoTW a gagné la médaille d'or à TAAI 2010 à la fois en Go 9x9, en Go 13x13 et en Go 19x19 !

Détails sur http://ai.csie.ndhu.edu.tw:9898/eng/

II. Algorithmique

MCTS (dont on présente ici la variante la plus connue, UCT) est un algorithme pour prendre des décisions dans des environnements incertains (par exemple, quand on ignore ce que va jouer l'adversaire; mais des incertitudes de type météorologique ou climatique peuvent être envisagées). Ses principes sont:

- un arbre (sous-arbre, stocké en mémoire, de l'immense arbre des possible) grossissant itérativement;

- des simulations aléatoires pour évaluer les feuilles de cet arbre.

Algorithmiquement, dans le cas du Go (on peut généraliser à d'autres jeux et à d'autres problèmes):

Ceci est le pseudo-code d'UCT, décrit plus formellement dans Kocsis et al, 2006. Le premier MCTS remonte

en fait à Coulom 2006. De nombreuses variations ont été formulées, dont

Des extensions au continu (Couetoux et al, 2011), des versions partiellement observables (Auger 2011, Teytaud et al, 2011) ont permis des applications variées (cf section V). MoGo(TW) a obtenu en 2010 le prix de la meilleure contribution aux jeux pour l'année 2009 (chessBase award).

III. Performances contre humains

Le jeu de Go se joue en 9x9, 13x13, et 19x19. Le handicap mesure l'avance que le joueur

le plus fort (ici l'humain) accorde à la machine pour compenser la différence de niveau.

a) Palmarès de MoGo en 9x9: victoires sans handicap

- première victoire contre un pro en 9x9

- première victoire contre un top pro en 9x9 en tant que noir (noir est plus dur; à ma

connaissance on est toujours les seuls à avoir réussi ce type de perfs)

b) Palmarès de MoGo en 13x13: victoires avec handicap modéré

- 2010: première victoire contre un 6D à handicap 2

La première victoire contre un joueur 6D

La première victoire contre un joueur 6D

à handicap 2 en 13x13

- 2011: première victoire contre un pro à handicap 2.5

c) Palmarès de MoGo en 19x19: le chemin est encore long - fort handicap!

- première victoire contre un pro en 19x19 à H9

- première victoire contre un pro en 19x19 à H6

- première victoire contre un top pro en 19x19 à H7 (record égalé depuis, mais non battu):

La première victoire à H7 contre un top pro

(Performance égalée par Pachi récemment:

le groupe J4 est mort et noir gagne)

Notons qu'il y a quelques années les machines perdaient même à handicap 29,

un nombre colossal qui ne fait aucun sens pour les joueuers humains et montre l'immense progrès réalisé depuis, loin d'être explicable par le seul essort des machines.

d) Autres variantes du jeu de Go

(http://teytaud.over-blog.com/

- victoire contre des pros sur un match complet

(3 parties sur 4) en go 9x9 en aveugle, dont 2/2 contre un top pro.

- victoire contre un 6D sans handicap sur des positions aléatoires.

IV. Diffusion & importance du logiciel

- le code MoGo a eu des dizaines de milliers de downloads

==> chessBase award en 2009 pour la plus grande avancée de l'année dans les jeux.

- forte internationalisation (contributeurs sur 3 continents)

- centre d'un MOU entre deux universités asiatiques http://www.nchc.org.tw/en/news/index.php?NEWS_ID=70

- son utilisation de patterns dans le Monte-Carlo a influencé tous les codes existants.

- première utilisation de la technique Monte-Carlo Tree Search sur machine

massivement parallèle (cumulant multithreading et parallélisation à passage de messages); utilisation régulière sur

Grid5000 et optimisation de la bibliothèque d'ouvertures sur grille.

Un des clusters de Grid5000 (cluster de SMP).

V. Au delà du Go

Le MCTS s'applique loin au delà du jeu de Go (voir ici une explication des principes du MCTS). Citons:

- application au power management http://www.lri.fr/~teytaud/

- applications à des problématiques fondamentales d'IA

- applications à des jeux de cartes partiellement observables (IA au meilleur

niveau humain à Urban Rivals www.urban-rivals.com , jeu à 11 millions d'utilisateurs

enregistrés)

Un personnage d'Urban Rivals

MoGoTW, a program based on recent progresses in artificial intelligence (not using alpha-beta but Monte-Carlo Tree Search, see here for other applications of this beautiful techniques), won the first game with handicap 7

against a top professional player.

The graph of the game is here:

and the file of the game can be found here.

MoGoTW is supported by Grid5000, and was here using Huygens cluster. It benefitted also from support by NUTN

It was played in 2009 at Taiwan's open ( http://epochtimes.com/b5/9/2/11/n2425091.htm ).

The opponent is a top professional player, i.e. he is professional, ranked 9P (the highest level),

and recently won a major tournament (the LG Cup 2007):

Left: Arpad Rimmel (MoGoTW's operator).

Right: Chun-Hsun Chou (9Dan Pro player).

bibtex entry:

@inproceedings{winWithH7AgainstPro,

HAL_ID = {inria-00386477},

URL = {http://hal.inria.fr/inria-00386477/en/},

title = { {A}dding expert knowledge and exploration in {M}onte-{C}arlo {T}ree {S}earch},

author = {{C}haslot, {G}uillaume and {F}iter, {C}hristophe and {H}oock, {J}ean-{B}aptiste and {R}immel, {A}rpad and {T}eytaud, {O}livier},

abstract = {{W}e present a new exploration term, more efficient than clas- sical {UCT}-like exploration terms and combining efficiently expert rules, patterns extracted from datasets, {A}ll-{M}oves-{A}s-{F}irst values and classi- cal online values. {A}s this improved bandit formula does not solve several important situations (semeais, nakade) in computer {G}o, we present three other important improvements which are central in the recent progress of our program {M}o{G}o: { {W}e show an expert-based improvement of {M}onte-{C}arlo simulations for nakade situations; we also emphasize some limitations of this modification. { {W}e show a technique which preserves diversity in the {M}onte-{C}arlo simulation, which greatly improves the results in 19x19. { {W}hereas the {UCB}-based exploration term is not efficient in {M}o{G}o, we show a new exploration term which is highly efficient in {M}o{G}o. {M}o{G}o recently won a game with handicap 7 against a 9{D}an {P}ro player, {Z}hou {J}un{X}un, winner of the {LG} {C}up 2007, and a game with handicap 6 against a 1{D}an pro player, {L}i-{C}hen {C}hien.},

language = {{E}nglish},

affiliation = {{M}aastricht {U}niversity - univ. {M}aastricht - {TAO} - {INRIA} {S}aclay - {I}le de {F}rance - {INRIA} - {CNRS} : {UMR}8623 - {U}niversit{\'e} {P}aris {S}ud - {P}aris {XI} - {TAO} - {INRIA} {F}uturs - {INRIA} - {CNRS} : {UMR}8623 - {U}niversit{\'e} {P}aris {S}ud - {P}aris {XI} - {L}aboratoire de {R}echerche en {I}nformatique - {LRI} - {CNRS} : {UMR}8623 - {U}niversit{\'e} {P}aris {S}ud - {P}aris {XI} },

booktitle = {{A}dvances in {C}omputer {G}ames },

publisher = {{S}pringer },

address = {{P}amplona {S}pain },

audience = {international },

year = {2009},

URL = {http://hal.inria.fr/inria-00386477/PDF/peacg.pdf},

}

SMP clusters form NUTN (left) and

Grid5000 (right)

Ping-Chiang Chou

(5 Dan pro Go player)

Below the summary of games between computers and humans

in SSCI 2011; SGFs are here: http://ssci2011.nutn.edu.tw/

and Go from random initial board report is http://www.lri.fr/~teytaud/

and blind-Go report is http://www.lri.fr/~teytaud/

Pachi and MoGoTW are running on clusters of SMPs; MoGoTW and been supported by the Grid5000 project (www.grid5000.fr). It is based on a work in the Tao team (lri, inria),

completed by a joint research project with the National University of Tainan (Taiwan).

Vous partez de zéro et vous voulez devenir un expert en optimisation ? Il y a du travail :-)

Ci-dessous une bibliographie (juste deux livres) sur le sujet, pour avoir les bases:

Une bibliographie de theorie de l'apprentissage et réseaux de neurones et un peu d'apprentissage par renforcement.

Ci-dessous donc la liste de mes bouquins favoris sur le sujet:

Inclut notamment l'apprentissage actif.

Inclut notamment l'apprentissage actif.

Comme son titre l'indique, l'ouvrage de référence lorsque l'approximation de fonction est dédiée à des problèmes de renforcement (jeux, contrôle).

Comme son titre l'indique, l'ouvrage de référence lorsque l'approximation de fonction est dédiée à des problèmes de renforcement (jeux, contrôle).

The 20th of July, 2010, at WCCI 2010 in Barcelona, some games were played by boths against humans.

This page is centered on the games played by MoGo and MoGoTW, but I give also a fast overview of other game.

A main novelty is the presence of 13x13 games. MoGo and MoGoTW are supported by the Grid5000 project.

There are not so many games against strong humans and computers in 13x13; I guess the game below is the first ever win of a computer against a 6D human in 13x13 Go with handicap 2:

This game was won by MoGo playing on 15 octo-cores. As usual, the bot gave away some points at the end, and won just by 0.5 whereas winning by more points was easy.The SGF file can be found here:

http://www.lri.fr/~teytaud/ARCHIVE_BARCELONA/firstEverWinAgainst6D_with_Handicap2_in_13x13.sgf

MoGo won the next game with H2 against another 6D player, Shang-Rong Ts

ai, also in 13x13:

At this point, MoGo (black) used a threat in B2; white can only win the ko (F19) by playing in the upper part (G11), otherwise black wins the game by killing the big white group H13; so white does not reply to the threat, MoGo captures the lower left corner and black (MoGo) wins. This shows that bots can sometimes play well ko fights.The complete game

can be found here:

Incidentally, MoGo won a 9x9 game against a 9P as white:

The pro said that move 28 was a very good move, necessary for winning this game. This makes MoGoTW the first ever bot which won against a pro both a black and as white. The game can be found here:

http://www.lri.fr/~teytaud/ARCHIVE_BARCELONA/gameWonIn9x9AsWhiteAgainst9P.sgf

On the other hand, MoGo lost as black, in spite of an opening very close to a past win

against a 9P player:

Other bots:

Paper on technical tools for this: http://hal.archives-ouvertes.fr/inria-00544622/en/

bibtex entry:

@article{RIMMEL:2010:INRIA-00544622:1,

HAL_ID = {inria-00544622},

URL = {http://hal.archives-ouvertes.fr/inria-00544622/en/},

title = { {C}urrent {F}rontiers in {C}omputer {G}o},

author = {{R}immel, {A}rpad and {T}eytaud, {O}livier and {L}ee, {C}hang-{S}h

ing and {Y}en, {S}hi-{J}im and {W}ang, {M}ei-{H}ui and {T}sai, {S}hang-{R}ong},

abstract = {{T}his paper presents the recent technical advances in {M}on

te-{C}arlo {T}ree {S}earch for the {G}ame of {G}o, shows the many similarities and the rare differen

ces between the current best programs, and reports the results of the computer-{G}o event organized

at {FUZZ}-{IEEE} 2009, in which four main {G}o programs played against top level humans. {W}e see th

at in 9x9, computers are very close to the best human level, and can be improved easily for the open

ing book; whereas in 19x19, handicap 7 is not enough for the computers to win against top level prof

essional players, due to some clearly understood (but not solved) weaknesses of the current algorith

ms. {A}pplications far from the game of {G}o are also cited. {I}mportantly, the first ever win of a c

omputer against a 9th {D}an professional player in 9x9 {G}o occurred in this event.},

language = {{A}nglais},

affiliation = {{D}epartment of {C}omputing {S}cience - {D}epartm

ent of {C}omputing {S}cience, {U}niversity of {A}lberta - {TAO} - {INRIA} {S}aclay - {I}le de {F}ran

ce - {INRIA} - {CNRS} : {UMR}8623 - {U}niversit{\'e} {P}aris {S}ud - {P}aris {XI} - {L}aboratoire de

{R}echerche en {I}nformatique - {LRI} - {CNRS} : {UMR}8623 - {U}niversit{\'e} {P}aris {S}ud - {P}ar

is {XI} - {D}epartment of {C}omputer {S}cience and {I}nformation {E}ngineering - {NUTN} - {N}ational

{D}ong {H}wa {U}niversity - {NDHU} - {NDHU} - {D}epartment of {I}nformation {M}anagement - {CJCU} }

,

pages = {in press },

journal = {{IEEE} {T}ransactions on {C}omputational {I}n

telligence and {A}rtificial {I}ntelligence in {G}ames },

audience = {internationale },

year = {2010},

URL = {http://hal.archives-ouvertes.fr/inria

-00544622/PDF/ct.pdf},

}

How complex is your game ?

A usual tool for answering such a question is computational complexity.

It consists in finding where the problem is, in the following scale:

The classes on the right are more difficult.

For the most important classes:

- PL is the class of problems solvable in poly-logarithmic time;

- P is the class of problems solvable in polynomial time

- PSPACE is the class of problems solvable with polynomial memory

- EXP is the class or problems solvable in exponential time

- EXPSPACE is the class of problems solvable in exponential space.

Given a position, how "powerful" should be your computer, if you want it to find if this situation, in case of perfect play,

is a win for the player to play.

We will here consider only deterministic (i.e. non randomized - this is not "determinism" in the sense of P vs NP) games.

Zero-sum two-player games, existence of strategies winning with proba > c:

the surprising thing is that, in the partially observable case, even on a finite board (finite state space), the problem is undecidable. If c<1 and c>0, then the problem is undecidable. How is it possible ? The trick is that usually people consider this case as decidable, because they consider the problem of the existence of a winning strategy independently of what is played by the opponent. This makes the problem much simpler, as in particular the opponent does not have to be clever; he can just play randomly, independently of observations, as it is sufficient for him to win with proba >0.

Importantly, this questions is probably the most natural one. We now consider the easier cases in which we look for a forced win. But keep in mind that the natural case is probably more the case above.

Remark: the undecidability certainly not holds in the fully observable case, which is immediately downgraded to the same case as the existence of winning strategies whatever may be the choice of the opponents, below.

Zero-sum two-player games: the existence of winning strategies whatever may be the choice of the opponents

Consider a game which is encoded in succinct form; this means that the game is given as a simple automaton,

updating variables depending on some rules.

Then, the complexities are as follows:

- deciding whether the situation is a forced win, if everything is observable, is EXPTIME-complete;

- deciding whether the situation is a forced win, if nothing is observable (you have no info!) is EXPSPACE-complete;

- deciding whether the situation is a forced win, if the situation is partially observable, is 2EXP-complete.

==> Rintanen's paper is the main reference in this case.

The game of Go with japanese rules is fully observable; it is EXPTIME-complete. It is therefore as complicated as it is possible for a fully observable game that can be described in succinct form. This is proved in Robson's impressive paper which exhibits a complicated ko-fights whose results decides the issue of a connection problem.

And what if the game is limited in length, to an exponential length ? still the existence of winning strategies, whatever may be the choice of the opponents

Things are much easier in this case:

- the fully observable case is EXPTIME in the fully observable case (no change!);

- the no-observability case is NEXPTIME (much easier);

- the partially observable case is EXPSPACE, which is easier than 2EXP.

This was shown in papers by Mundhenk et al.

And what if the game is limited in length, e.g. an acyclic graph is given and the game is played on the graph, i.e. at most a linear number of possible states ?

The fully observable case then becomes linear (solving by minimax).

I'm not aware of results in the partially or unobservable case, please tell me if you know (olivier.teytaud@gmail.com).

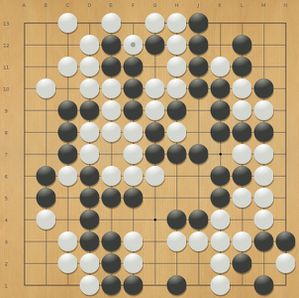

This page is only understandable by go players.

I'm interested in the game of Go as it's a good testbed for computer science - whereas in chess computers are stronger

than humans (by far), computers are still weak in the game of Go. In particular, they sometimes make errors in ladders.

Below, a beautiful ladder problem, generated by computer scientists.

I don't give the reference, so that nobody can cheat by looking at the answer,

I hope that the authors of this ladder will forgive me :-)

If you have comments on this, please email me olivier.teytaud@gmail.com (in particular if you try to solve it).

Question: black plays. Can black kill the left white stone marked with a triangle ?

High quality version of the image: http://www.lri.fr/~teytaud/goladder.jpg

On me demande régulièrement de citer d'autres applications de la technologie MCTS que le jeu de Go; voici donc un bref résumé.

On trouvera une présentation de la technologie MCTS ici:

http://teytaud.over-blog.c

1) En restant dans le domaine des jeux:

La technologie dite Monte-Carlo Tree Search (MCTS; on parle parfois aussi de "Upper Confidence Trees") est appliquée avec beaucoup de succès sur des jeux difficiles, où l'humain est traditionnellement bien meilleur que la machine: Hex, Havannah, sont des exemples.

Elle est aussi très efficace pour jouer sur la simple base de règles, sans développement spécifique des jeux: ainsi

MCTS gagne toutes les compétitions de "jeu général", c'est-à-dire les compétitions où les règles sont fournies sous format informatique aux programmes, qui ignorent à l'avance à quel jeu ils vont jouer. Ainsi, les programmes peuvent s'adapter directement à de nombreux jeux, y compris des variantes originales de Go.

2) Complètement en dehors des jeux

Notre équipe a réalisé avec succès (avec Carnegie Mellon university) une application à l'optimisation sur des graphes, avec notamment le cas industriel de l'optimisation de librairie de calcul linéaire (http://hal.inria.fr/inria-

Nous démarrons par ailleurs un projet dans l'énergie, conjointement avec la société Artelys; il s'agit de comparer MCTS et les techniques usuelles pour le choix de gestion d'un parc de production électrique. L'application est très préliminaire, mais les essais sur des cas tests de dimension comparable sont encourageants.

Nos confrères à Maastricht ont réalisé des applications à la gestion de stocks: en effet, choisir une action sur un stock en ayant des incertitudes sur ce qui peut entrer par ailleurs dans le stock (et à quel prix) est très proche de poser une pierre sur un goban

en ignorant où l'adversaire va poser sa pierre.

Nous avons des applications de type fondamental, appelées optimisation non-linéaire chère et apprentissage actif cher. Ces méthodes, pas encore directement utilisées dans l'industrie, donnent de bons résultats sur des cas tests; les domaines touchés possiblement sont tous ceux où choisir quel prototype construire, lorsqu'il est long et/ou cher de prédire l'efficacité du prototype; les industries automobile, aérienne, spatiale par exemple.

Globalement, tout le champ du contrôle en temps discret, sur un nombre de pas de temps modéré et possiblement en très grande dimension, est concerné. Le jeu de Go est dans une fourchette idéale, avec une grande dimension (>300 actions possibles en début de partie) et un nombre de pas de temps modéré (une partie peut difficilement dépasser 400 coups); nous sommes là dans un registre mal traité par les techniques classiques, couvrant un grand nombre de problèmes, et où MCTS apporte beaucoup.

A paper on continuous UCT: http://hal.archives-ouvertes.fr/hal-00542673/en/

Bibtex entry:

@inproceedings{COUTOUX:2011:HAL-00542673:1,

HAL_ID = {hal-00542673},

URL = {http://hal.archives-ouvertes.fr/hal-00542673/en/},

title = { {C}ontinuous {U}pper {C}onfidence {T}rees},

author = {{C}ouёtoux, {A}drien and {H}oock, {J}ean-{B}aptiste and {S}okolovska, {N}ataliya and {T}eytaud, {O}livier and {B}onnard, {N}icolas},

abstract = {{U}pper {C}onfidence {T}rees are a very efficient tool for solving {M}arkov {D}ecision {P}rocesses; originating in difficult games like the game of {G}o, it is in particular surprisingly efficient in high dimensional problems. {I}t is known that it can be adapted to continuous domains in some cases (in particular continuous action spaces). {W}e here present an extension of {U}pper {C}onfidence {T}rees to continuous stochastic problems. {W}e (i) show a deceptive problem on which the classical {U}pper {C}onfidence {T}ree approach does not work, even with arbitrarily large computational power and with progressive widening (ii) propose an improvement, termed double-progressive widening, which takes care of the compromise between variance (we want infinitely many simulations for each action/state) and bias (we want sufficiently many nodes to avoid a bias by the first nodes) and which extends the classical progressive widening (iii) discuss its consistency and show experimentally that it performs well on the deceptive problem and on experimental benchmarks. {W}e guess that the double-progressive widening trick can be used for other algorithms as well, as a general tool for ensuring a good bias/variance compromise in search algorithms.},

language = {{A}nglais},

affiliation = {{L}aboratoire de {R}echerche en {I}nformatique - {LRI} - {CNRS} : {UMR}8623 - {U}niversit{\'e} {P}aris {S}ud - {P}aris {XI} - {TAO} - {INRIA} {S}aclay - {I}le de {F}rance - {INRIA} - {CNRS} : {UMR}8623 - {U}niversit{\'e} {P}aris {S}ud - {P}aris {XI} - {C}hercheur {I}nd{\'e}pendant - {A}ucune },

booktitle = {{LION}'11: {P}roceedings of the 5th {I}nternational {C}onference on {L}earning and {I}ntelligent {O}ptimizatio{N} {LION}'11: {P}roceedings of the 5th {I}nternational {C}onference on {L}earning and {I}ntelligent {O}ptimizatio{N} },

pages = {{TBA} },

address = {{I}talie },

audience = {internationale },

day = {31},

month = {01},

year = {2011},

URL = {http://hal.archives-ouvertes.fr/hal-00542673/PDF/c0mcts.pdf},

}